I’ve been thinking about this topic a lot, and I think I have a good rundown of how the five senses could work in the Macroscopic stage.

Sight

There hasn’t been much talk about it, but from what I’ve heard, some people have suggested making your creature’s sight blurred, which I vehemently disagree with. I hate it when things are blurred; it makes my eyes water. Instead, if your creature has bad eyesight, things would look simpler. At its simplest, I was thinking of something close to this style https://www.youtube.com/watch?v=jlKNOirh66E, probably even simpler. With better eyesight, it may look more like this: Macroscopic editor concept art - #2 by Mr_42. Having no eyesight at all would result in pitch-black surroundings.

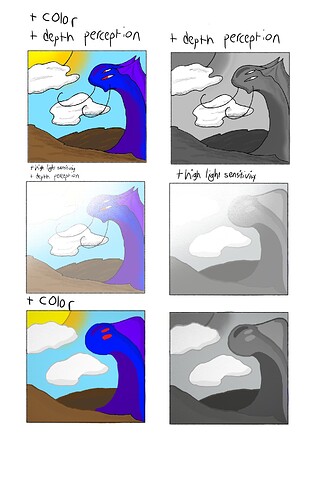

Eyesight could scale in three separate ways: color spectrum, depth perception, and eye sensitivity.

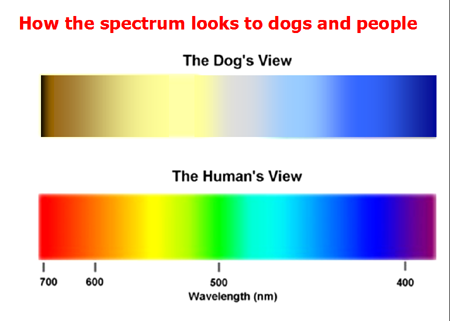

Color spectrum - affects which colors you can see, starting from greyscale and extending into UV and beyond.

Depth perception - affects how detailed a shape is, from barely being able to tell where a creature’s head is up to seeing a human level of detail.

Eye sensitivity - the more sensitive you make your eyes, the better you can see in the dark, but the more you’ll be blinded in the light, to the point where you can’t see anything in the daytime.
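To make the three sliders a bit more concrete, here is a minimal sketch of how they might be stored and queried. Everything in it is an assumption for illustration: the intermediate `SPECTRUM_TIERS` names, mapping depth perception to the number of outline vertices, and a visibility curve that peaks at a light level of `1 - sensitivity`.

```python
from dataclasses import dataclass

# Hypothetical ordering of color-spectrum tiers. The post only fixes the ends:
# greyscale at the bottom, UV "and beyond" at the top.
SPECTRUM_TIERS = ["greyscale", "dichromatic", "trichromatic", "uv"]


def clamp01(x: float) -> float:
    """Keep a value inside the 0..1 range."""
    return max(0.0, min(1.0, x))


@dataclass
class Eyesight:
    color_spectrum: int      # index of the highest tier in SPECTRUM_TIERS
    depth_perception: float  # 0.0 = barely find the head, 1.0 = human-level detail
    sensitivity: float       # 0.0 = day-adapted, 1.0 = fully night-adapted

    def visible_tiers(self) -> list[str]:
        """Color tiers this creature can see, from greyscale upward."""
        return SPECTRUM_TIERS[: self.color_spectrum + 1]

    def shape_vertices(self, max_vertices: int = 64) -> int:
        """Assumed mapping: more depth perception gives more detailed outlines."""
        return round(3 + clamp01(self.depth_perception) * (max_vertices - 3))

    def visibility(self, light_level: float) -> float:
        """How much can be seen (0..1) at a light level from 0 (night) to 1 (day).

        Assumed model: vision peaks at a light level of (1 - sensitivity), so very
        sensitive eyes see best in the dark and are blinded in daylight.
        """
        ideal_light = 1.0 - clamp01(self.sensitivity)
        return clamp01(1.0 - abs(clamp01(light_level) - ideal_light))
```

With `sensitivity=1.0`, `visibility(0.0)` is 1.0 and `visibility(1.0)` is 0.0, which matches the "can’t see anything in the daytime" extreme.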

Sound

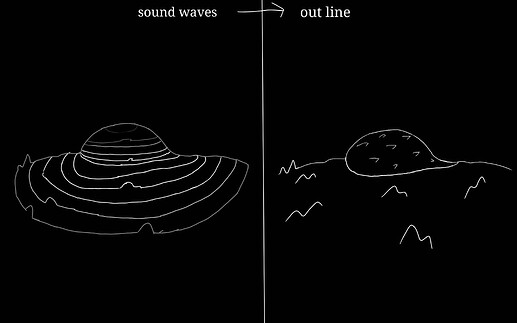

The discussion I’ve seen on this pretty much amounts to doing things like this https://i.stack.imgur.com/MXvNT.gif, which is exactly what I’m suggesting: lines representing soundwaves passing over creatures and objects. I also think leaving an outline behind would make things easier, so you don’t have to scream constantly to know where things are.

How long this outline lasts would scale with your creature’s memory, giving improved brain cells a use beyond just progressing.
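A minimal sketch of the memory-scaled outline, assuming a linear fade and made-up tuning values (`BASE_OUTLINE_SECONDS`, `SECONDS_PER_MEMORY_LEVEL`); the post only says that the duration should grow with memory.

```python
from dataclasses import dataclass

# Assumed tuning values: the post only says the duration scales with memory.
BASE_OUTLINE_SECONDS = 2.0
SECONDS_PER_MEMORY_LEVEL = 1.5


def outline_duration(memory_level: int) -> float:
    """Seconds an outline left by a passing sound wave stays on screen."""
    return BASE_OUTLINE_SECONDS + SECONDS_PER_MEMORY_LEVEL * memory_level


@dataclass
class Outline:
    created_at: float  # game time (seconds) when the sound wave passed
    duration: float    # lifetime from outline_duration()

    def opacity(self, now: float) -> float:
        """Fade linearly from fully visible (1.0) to gone (0.0)."""
        age = max(0.0, now - self.created_at)
        return max(0.0, 1.0 - age / self.duration)


def prune(outlines: list[Outline], now: float) -> list[Outline]:
    """Drop outlines that have fully faded."""
    return [o for o in outlines if o.opacity(now) > 0.0]
```

A creature with memory level 2 would keep each outline for 5 seconds, at half opacity after 2.5 seconds.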

Touch

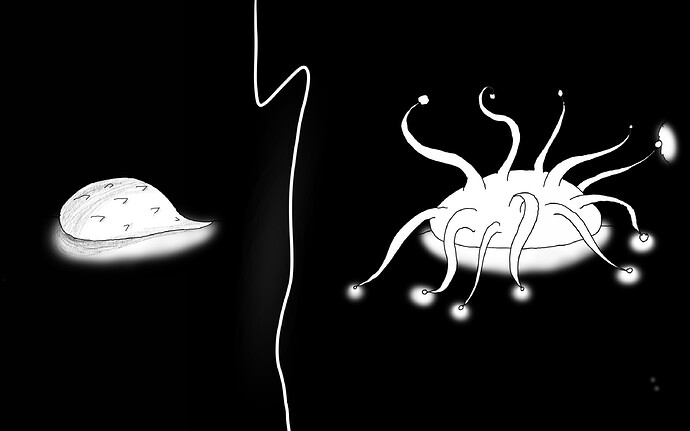

On average, this is the strongest sense the player will start with. It acts like Minecraft’s Blindness effect, which illuminates only a small area around you:

https://static.wikia.nocookie.net/minecraft_gamepedia/images/0/0b/Blindness_View.png/revision/latest?cb=20201108162926

However, this version will be black and white only and will illuminate only the things the player is touching.

The illuminated area could last a bit before fading away, so that the player doesn’t get turned around.
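Here is a minimal sketch of the "black and white, only what you touch" rule. The greyscale conversion uses the standard ITU-R BT.601 luma weights; representing the scene as a dictionary of object names to RGB colors is an assumption made purely for illustration.

```python
Color = tuple[int, int, int]
BLACK: Color = (0, 0, 0)


def to_greyscale(rgb: Color) -> Color:
    """Convert an RGB color to grey using the ITU-R BT.601 luma weights."""
    r, g, b = rgb
    y = round(0.299 * r + 0.587 * g + 0.114 * b)
    return (y, y, y)


def touch_view(scene: dict[str, Color], touched: set[str]) -> dict[str, Color]:
    """Show touched objects in greyscale and leave everything else black."""
    return {
        name: to_greyscale(rgb) if name in touched else BLACK
        for name, rgb in scene.items()
    }
```

For example, touching a red rock in `{"rock": (200, 0, 0), "tree": (0, 200, 0)}` would show the rock as `(60, 60, 60)` while the tree stays black.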

Smell

Smell and taste, from what I’ve seen, were only thought of as maybe a pop-up or a line of text, but I believe we can go much more in depth than that.

Smell could be represented by blanketing everything in colors that represent each smell, similar to compound clouds. To keep everything from looking like rainbow barf, all the smells will mix into a single color, which is more realistic in my opinion.

This color can then be separated into distinct colors if you use the “sniff” key. The better your sense of smell, the more defined the colors will be, but the more overpowering stronger smells will be.
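One way to read the mixing rule is as an intensity-weighted average of all smell colors, with “sniff” pulling the blend apart into its strongest components. The `Smell` type, the weighting, and the rule that each level of smell acuity reveals one more smell are assumptions for illustration, not a settled design.

```python
from dataclasses import dataclass

Color = tuple[float, float, float]


@dataclass
class Smell:
    source: str       # what the smell comes from, e.g. "berry bush"
    color: Color      # color used to draw this smell, like a compound cloud
    intensity: float  # concentration at the player's position


def mixed_color(smells: list[Smell]) -> Color:
    """Blend every smell into a single intensity-weighted color."""
    total = sum(s.intensity for s in smells)
    if total == 0:
        return (0.0, 0.0, 0.0)
    return tuple(sum(s.color[i] * s.intensity for s in smells) / total for i in range(3))


def sniff(smells: list[Smell], acuity: int) -> list[Smell]:
    """Separate the blend into individual smells, strongest first.

    Assumption: each level of smell acuity lets you pick out one more smell.
    """
    ranked = sorted(smells, key=lambda s: s.intensity, reverse=True)
    return ranked[: 1 + acuity]
```

A strong red berry smell (intensity 3) mixed with a faint blue wolf smell (intensity 1) gives a single color of `(0.75, 0.0, 0.25)` until the player sniffs.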

Taste

Taste is a bit more difficult. I think having some text for it would work well, and making it work like smell, but with touch-based mechanics, would also work fine, so licking something would be an alternative to “sniff”. I also think having each text say something like “tastes sweet”, “gross”, “yummy”, etc., would give insight into what it does to your creature. These texts could then give more detail if you know where the tastes are coming from; for example, it could say “tastes like X creature” if you know the creature smells like that.

The same thing could also work for smell.
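A minimal sketch of the taste text, assuming a hypothetical `TASTE_TEXT` table from taste categories to messages and a set of creatures whose smell the player already knows; the category names and the fallback message are made up for illustration.

```python
# Hypothetical mapping from a taste category to the text shown to the player.
TASTE_TEXT = {
    "sweet": "Tastes sweet",
    "bitter": "Tastes gross",
    "savory": "Tastes yummy",
}


def taste_message(taste: str, source: str, known_smells: set[str]) -> str:
    """Build the text shown after licking something.

    If the player already knows what the source smells like, name it.
    """
    base = TASTE_TEXT.get(taste, "Tastes strange")
    if source in known_smells:
        return f"{base}, like {source}"
    return base
```

Licking an unknown bush would just say “Tastes sweet”, while licking something whose smell you have learned could say “Tastes yummy, like wolf”.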

Other

Sense layering

In order for you to sense things quickly without always switching between senses, senses can layer when a “point of interest” pops up. Points of interest are things that will fulfill your creature’s food, water, or other needs; they also include danger. When a point of interest appears, the sense that picks it up will create a light overlay on the current sense.

Sight and smell with sound

Sight and smell will be overlapped by sound in the same way, with the surroundings darkening and the soundwaves becoming visible.

Sight with smell

When sight gets overlapped by smell, something similar to sound will happen, where everything will be lightly colored with smells.

Sound & touch with smell

When sound & touch get overlapped by smell, I think only the “point of interest” should be visible, in order to keep the surroundings from being cluttered.

Everything and sight

I think that when anything gets overlapped by sight, whatever is “seen” (i.e. smelled, heard, or touched) will simply have its real colors shown. I don’t think this would work well with smell, though.

It should also be possible to toggle layering on and off.
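The layering rules above can be collected into a small lookup table keyed by (active sense, overlaying sense). This is a minimal sketch: the `Sense` enum, the `Detection` type, and the exact set of point-of-interest kinds are assumptions for illustration, and smell overlapped by sight is left out because the post doubts it would work well.

```python
from dataclasses import dataclass
from enum import Enum


class Sense(Enum):
    SIGHT = "sight"
    SOUND = "sound"
    TOUCH = "touch"
    SMELL = "smell"


# Hypothetical subset of points of interest: the post lists food, water,
# other needs, and danger.
POI_KINDS = {"food", "water", "danger"}

# (active sense, overlaying sense) -> how the overlay is drawn.
# Smell overlapped by sight is omitted, as the post doubts it would work well.
OVERLAY_STYLE = {
    (Sense.SIGHT, Sense.SOUND): "darken surroundings and show soundwaves",
    (Sense.SMELL, Sense.SOUND): "darken surroundings and show soundwaves",
    (Sense.SIGHT, Sense.SMELL): "tint everything lightly with smell colors",
    (Sense.SOUND, Sense.SMELL): "show only the point of interest",
    (Sense.TOUCH, Sense.SMELL): "show only the point of interest",
    (Sense.SOUND, Sense.SIGHT): "show real colors of sensed things",
    (Sense.TOUCH, Sense.SIGHT): "show real colors of sensed things",
}


@dataclass
class Detection:
    kind: str     # what was detected, e.g. "food" or "pebble"
    sense: Sense  # which sense picked it up


def overlays(active: Sense, detections: list[Detection],
             layering_enabled: bool = True) -> dict[Sense, str]:
    """Return the overlays to draw on top of the active sense."""
    if not layering_enabled:
        return {}
    result: dict[Sense, str] = {}
    for d in detections:
        # Ignore things that are not points of interest, and the active sense itself.
        if d.kind not in POI_KINDS or d.sense == active:
            continue
        style = OVERLAY_STYLE.get((active, d.sense))
        if style is not None:
            result[d.sense] = style
    return result
```

With sight active, smelling food adds a light smell tint, while hearing a pebble adds nothing because it is not a point of interest.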

Sense mixing

This is about how the senses could mix in unique ways that are different from layering.

Here are the senses that I think can mix:

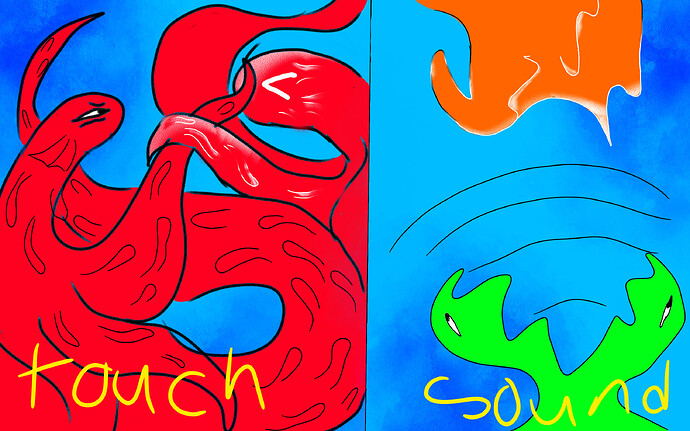

Sight & sound

If your creature’s depth perception is below the maximum, you can use sound to fill the gap, allowing you to see creatures in detail without having to max out your depth perception.

Touch & sight

Same as with sound, except it uses touch instead.
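A minimal sketch of "filling the gap", assuming detail, hearing, and touch are all on a 0..1 scale and that the helping sense covers a fraction of the remaining gap to maximum detail. The function name and the proportional rule are hypothetical; the same function serves both sight & sound and touch & sight.

```python
def clamp01(x: float) -> float:
    """Keep a value inside the 0..1 range."""
    return max(0.0, min(1.0, x))


def filled_detail(depth_perception: float, assist: float, assisted: bool = True) -> float:
    """Detail level (0..1) after another sense fills part of the gap to max.

    assist: strength of the helping sense (hearing for sight & sound,
    touch for touch & sight), assumed to be on a 0..1 scale.
    assisted: whether that sense currently covers the creature, e.g. a
    sound wave is passing over it or the player is touching it.
    """
    depth = clamp01(depth_perception)
    if not assisted:
        return depth
    gap = 1.0 - depth
    return depth + gap * clamp01(assist)
```

Under this rule, half depth perception plus half-strength hearing gives 0.75 detail on creatures a sound wave passes over, and maxed depth perception gains nothing extra.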

Sound & touch

Maybe soundwaves that come from the player could be thicker?

The illuminated areas that your creature leaves behind could last longer when a sound wave passes over them? But this requires more thought.